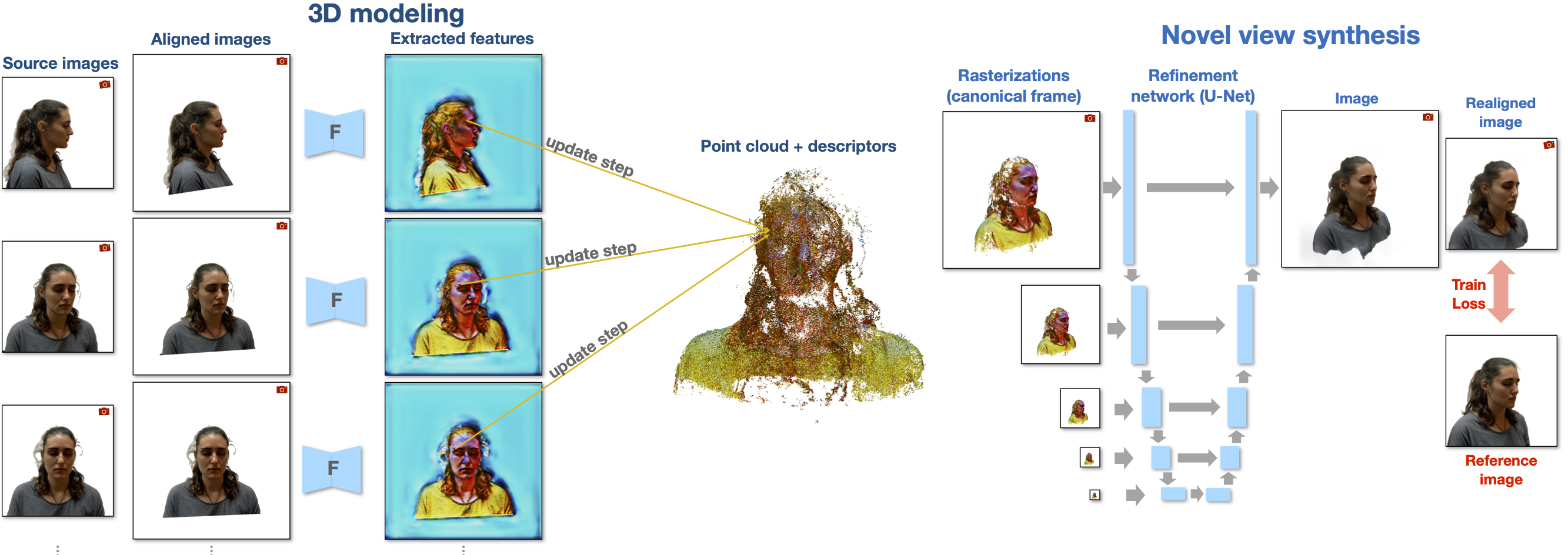

Pipeline overview

We represent the scene as a point cloud in which each point carries a view-dependent neural descriptor. During the 3D modeling stage, we process the input views sequentially. For each view, we align the input image and extract its features, and online aggregation then updates the neural descriptors of the points directly, without any fitting. During the novel view synthesis stage, we rasterize the point cloud, pass the rasterized result through the rendering network, and post-process the network output (output image alignment) to obtain the novel view.
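The two stages above can be sketched in plain Python. This is a minimal illustration under stated assumptions, not the method's implementation. `Point`, `aggregate_view`, and `rasterize` are hypothetical names. The online aggregation is assumed to be a running mean of per-view features, because the paragraph does not specify the aggregation rule. View dependence of the descriptors, image alignment, feature extraction, the rendering network, and output alignment are all omitted. Rasterization assumes a camera at the origin looking along +z, a pinhole projection, and a z-buffer that keeps the nearest point per pixel.

```python
import math
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass
class Point:
    """Hypothetical point record: 3D position plus an aggregated descriptor."""
    xyz: Tuple[float, float, float]
    descriptor: List[float]
    count: int = 0  # number of views aggregated so far


def aggregate_view(points: List[Point], view_features: Dict[int, List[float]]) -> None:
    """Fold one view's per-point features into the descriptors (assumed running mean).

    `view_features` maps a point index to the feature vector observed for that
    point in the current view; it stands in for the omitted image alignment,
    feature extraction, and projection steps. No optimization is performed.
    """
    for idx, feat in view_features.items():
        p = points[idx]
        p.count += 1
        # Incremental mean: d <- d + (f - d) / n, so earlier views need not be stored.
        p.descriptor = [d + (f - d) / p.count for d, f in zip(p.descriptor, feat)]


def rasterize(points: List[Point], width: int, height: int, focal: float) -> List[List[List[float]]]:
    """Splat descriptors into a height x width feature image with a z-buffer.

    Assumes an identity camera pose and a pinhole model with the principal
    point at the image center. Empty pixels stay zero.
    """
    channels = len(points[0].descriptor) if points else 0
    depth = [[math.inf] * width for _ in range(height)]
    image = [[[0.0] * channels for _ in range(width)] for _ in range(height)]
    cx, cy = width / 2.0, height / 2.0
    for p in points:
        x, y, z = p.xyz
        if z <= 0:
            continue  # point is behind the camera
        u = math.floor(focal * x / z + cx)
        v = math.floor(focal * y / z + cy)
        # Keep only the nearest point that lands in each pixel.
        if 0 <= u < width and 0 <= v < height and z < depth[v][u]:
            depth[v][u] = z
            image[v][u] = list(p.descriptor)
    return image


if __name__ == "__main__":
    pts = [Point((0.0, 0.0, 2.0), [0.0, 0.0]), Point((0.0, 0.0, 4.0), [0.0, 0.0])]
    aggregate_view(pts, {0: [1.0, 2.0], 1: [5.0, 5.0]})  # first input view
    aggregate_view(pts, {0: [3.0, 4.0]})                  # second input view
    img = rasterize(pts, width=4, height=4, focal=1.0)
    print(pts[0].descriptor, img[2][2])  # the nearer point occludes the farther one
```

A running mean keeps the per-view cost constant and needs only one counter per point, which is what lets each view be folded in once and then discarded. The aggregation actually used by the method may differ, and in the full pipeline the rasterized feature image would then go to the rendering network.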